By Katie Paul and Anna Tong

NEW YORK (Reuters) – At its peak in the early 2000s, Photobucket was the world’s top image-hosting site. The media backbone for once-hot services like Myspace and Friendster, it boasted 70 million users and accounted for nearly half of the U.S. online photo market.

Today only 2 million people still use Photobucket, according to analytics tracker Similarweb. But the generative AI revolution may give it a new lease of life.

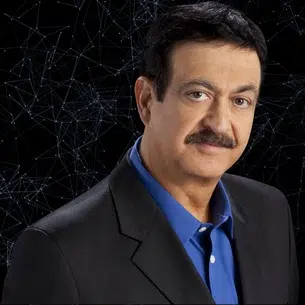

CEO Ted Leonard, who runs the 40-strong company out of Edwards, Colorado, told Reuters he is in talks with multiple tech companies to license Photobucket’s 13 billion photos and videos to be used to train generative AI models that can produce new content in response to text prompts.

He has discussed rates of between 5 cents and $1 per photo and more than $1 per video, he said, with prices varying widely both by the buyer and the types of imagery sought.

“We’ve spoken to companies that have said, ‘we need way more,’” Leonard added, with one buyer telling him they wanted over a billion videos, more than his platform has.

“You scratch your head and say, where do you get that?”

Photobucket declined to identify its prospective buyers, citing commercial confidentiality. The ongoing negotiations, which haven’t been previously reported, suggest the company could be sitting on billions of dollars’ worth of content and give a glimpse into a bustling data market that’s arising in the rush to dominate generative AI technology.

Tech giants like Google, Meta and Microsoft-backed OpenAI initially used reams of data scraped from the internet for free to train generative AI models like ChatGPT that can mimic human creativity. They have said that doing so is both legal and ethical, though they face lawsuits from a string of copyright holders over the practice.

At the same time, these tech companies are also quietly paying for content locked behind paywalls and login screens, giving rise to a hidden trade in everything from chat logs to long-forgotten personal photos from faded social media apps.

“There is a rush right now to go for copyright holders that have private collections of stuff that is not available to be scraped,” said Edward Klaris from law firm Klaris Law, which says it’s advising content owners on deals worth tens of millions of dollars apiece to license archives of photos, movies and books for AI training.

Reuters spoke to more than 30 people with knowledge of AI data deals, including current and former executives at companies involved, lawyers and consultants, to provide the first in-depth exploration of this fledgling market – detailing the types of content being bought, the prices materializing, plus emerging concerns about the risk of personal data making its way into AI models without people’s knowledge or explicit consent.

OpenAI, Google, Meta, Microsoft, Apple and Amazon all declined to comment on specific data deals and discussions for this article, although Microsoft and Google referred Reuters to supplier codes of conduct that include data-privacy provisions.

Google added that it would “take immediate action, up to and including termination” of its agreement with a supplier if it discovered a violation.

Many major market research firms say they have not even begun to estimate the size of the opaque AI data market, where companies often don’t disclose agreements. Those researchers who do, such as Business Research Insights, put the market at roughly $2.5 billion now and forecast it could grow close to $30 billion within a decade.

GENERATIVE DATA GOLD RUSH

The data land grab comes as makers of big generative AI “foundation” models face increasing pressure to account for the massive amounts of content they feed into their systems, a process known as “training” that requires intensive computing power and often takes months to complete.

Tech companies say the technology would be cost-prohibitive if they couldn’t use vast archives of free scraped web page data, such as those provided by non-profit repository Common Crawl, which they describe as “publicly available.”

Their approach has nonetheless drawn a wave of copyright lawsuits and regulatory heat, while prompting publishers to add code to their websites to block scraping.

In response, AI model makers have started hedging risks and securing data-supply chains, both through deals with content owners and via a burgeoning industry of data brokers that has popped up to satisfy demand.

In the months after ChatGPT debuted in late 2022, for instance, companies including Meta, Google, Amazon and Apple all struck agreements with stock image provider Shutterstock to use hundreds of millions of images, videos and music files in its library for training, according to a person familiar with the arrangements.

The deals with Big Tech firms initially ranged from $25 million to $50 million each, though most were later expanded, Shutterstock’s Chief Financial Officer Jarrod Yahes told Reuters. Smaller tech players have followed suit, spurring a fresh “flurry of activity” in the past two months, he added.

Yahes declined to comment on individual contracts. The Apple agreement, and the size of the other deals, haven’t previously been made public.

A Shutterstock competitor, Freepik, told Reuters it had struck agreements with two large tech companies to license the majority of its archive of 200 million images at 2 to 4 cents per image. There are five more similar deals in the pipeline, said CEO Joaquin Cuenca Abela, declining to identify buyers.

OpenAI, an early Shutterstock customer, has also signed licensing agreements with at least four news organizations, including The Associated Press and Axel Springer. Thomson Reuters, the owner of Reuters News, separately said it has struck deals to license news content to help train AI large language models, but didn’t disclose details.

‘ETHICALLY SOURCED’ CONTENT

An industry of dedicated AI data firms is emerging too, securing rights to real-world content like podcasts, short-form videos and interactions with digital assistants, while also building networks of short-term contract workers to produce custom visuals and voice samples from scratch, creating an Uber-esque gig economy for data.

Seattle-based Defined.ai licenses data to a range of companies including Google, Meta, Apple, Amazon and Microsoft, CEO Daniela Braga told Reuters.

Rates vary by buyer and content type, but Braga said companies are generally willing to pay $1 to $2 per image, $2 to $4 per short-form video and $100 to $300 per hour of longer films. The market rate for text is $0.001 per word, she added.

Images of nudity, which require the most sensitive handling, go for $5 to $7, she said.

Defined.ai splits those earnings with content providers, Braga said. It markets its datasets as “ethically sourced,” as it obtains consent from people whose data it uses and strips out personally identifying information, she added.

One of the firm’s suppliers, a Brazil-based entrepreneur, said he pays owners of the photos, podcasts and medical data he sources about 20% to 30% of total deal amounts.

The priciest images in his portfolio are those used to train AI systems that block content like graphic violence barred by the tech companies, said the supplier, who spoke on condition his company wasn’t identified, citing commercial sensitivity.

To fulfill those requests, he obtains images of crime scenes, conflict violence and surgeries – mainly from police, freelance photojournalists and medical students, respectively – often in places in South America and Africa where distributing graphic images is more common, he said.

He said he has received images from freelance photographers in Gaza since the start of the war there in October, plus some from Israel at the outset of hostilities.

His company hires nurses accustomed to seeing violent injuries to anonymize and annotate the images, which are disturbing to untrained eyes, he added.

‘I WOULD FIND IT RISKY’

While licensing could resolve some legal and ethical issues, resurrecting the archives of old internet names like Photobucket as fuel for the latest AI models raises others, particularly around user privacy, according to many of the industry players interviewed.

AI systems have been caught regurgitating exact copies of their training data, spitting out, for example, the Getty Images watermark, verbatim paragraphs of New York Times articles and images of real people. That means a person’s private photos or intimate thoughts posted decades ago could potentially wind up in generative AI outputs without notice or explicit consent.

Photobucket CEO Leonard says he is on solid legal ground, citing an update to the company’s terms of service in October that grants it the “unrestricted right” to sell any uploaded content for the purpose of training AI systems. He sees licensing data as an alternative to selling ads.

“We need to pay our bills, and this could give us the ability to continue to support free accounts,” he said.

Defined.ai’s Braga said she avoids acquiring content from “platform” companies like Photobucket and prefers to source social media photos from influencers who create them, who she said have a clearer claim to licensing rights.

“I would find it very risky,” Braga said of platform content. “If there’s some AI that generates something that resembles a picture of someone who never approved that, that’s a problem.”

Photobucket is not alone among platforms in embracing licensing. Tumblr’s parent company Automattic said last month it was sharing content with “select AI companies.” In February, Reuters reported Reddit struck a deal with Google to make its content available for training the latter’s AI models.

Ahead of its initial public offering in March, Reddit disclosed that its data-licensing business is the subject of a U.S. Federal Trade Commission inquiry and acknowledged it could fall foul of evolving privacy and intellectual-property regulations.

The FTC, which warned businesses in February against retroactively changing terms of service for AI usage, declined to comment on the Reddit inquiry or say whether it was looking into other training data deals.

(Reporting by Katie Paul in New York and Anna Tong in San Francisco; Additional reporting by Krystal Hu in New York; Editing by Kenneth Li and Pravin Char)
